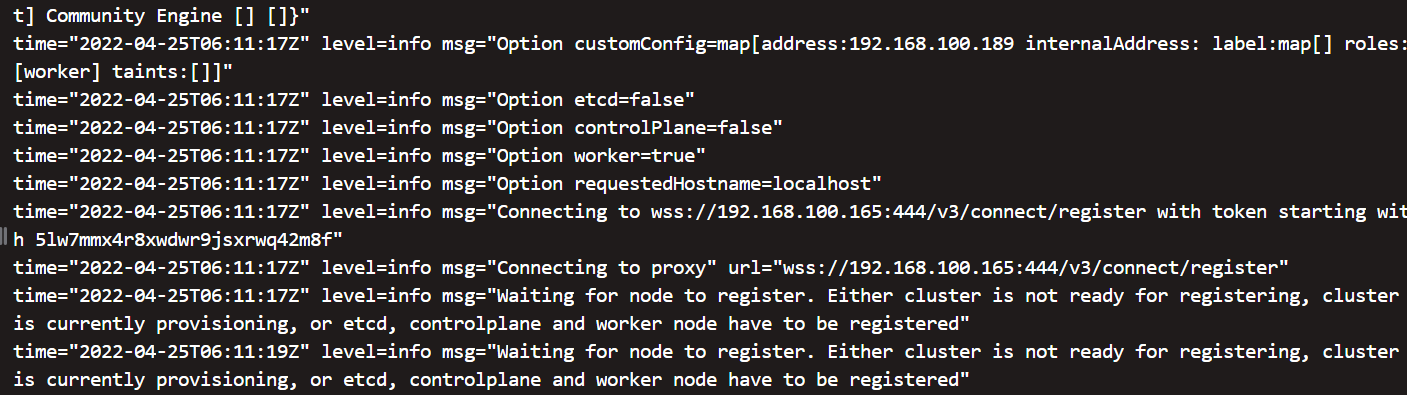

Looking at the rancher/rancher container logs, the following section stands out as differing from the logs of a successful launch:

    12:25:03 Running kube-scheduler -leader-elect=true -v=2 -address=0.0.0.0 -kubeconfig=/etc/kubernetes/ssl/kubecfg-kube-scheduler.yaml -v=1 -logtostderr=false -alsologtostderr=false
    E0617 12:25:03.352161 1 leaderelection.go:224] error retrieving resource lock kube-system/kube-controller-manager: endpoints "kube-controller-manager" is forbidden: User "system:kube-controller-manager" cannot get endpoints in the namespace "kube-system"

The same setup (done via Ansible) works fine the rest of the time. Periodically (perhaps 1 in 10-15 launches), the etcd and controlplane services do not register on this single-node Rancher setup.

Launch CloudMan 2 application from (note that this does not happen every time).

external DB)

Environment Template: (Cattle/Kubernetes/Swarm/Mesos)

Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)
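Because the failure is intermittent, it can help to scan saved container logs for the forbidden-lock error quoted above rather than reading them by eye. The following is a minimal sketch, not part of the original report: the function name `find_forbidden_locks` and the regular expression are assumptions, written only against the one log line shown here, and may need adjusting for other Kubernetes versions.

```python
import re

# Pattern modelled on the single leaderelection.go error line quoted above.
# Assumption: other occurrences follow the same wording.
FORBIDDEN_LOCK = re.compile(
    r'error retrieving resource lock (?P<lock>\S+): '
    r'(?P<resource>\w+) "(?P<name>[^"]+)" is forbidden: '
    r'User "(?P<user>[^"]+)"'
)


def find_forbidden_locks(lines):
    """Return one dict per log line that reports a forbidden resource lock."""
    hits = []
    for line in lines:
        match = FORBIDDEN_LOCK.search(line)
        if match:
            hits.append(match.groupdict())
    return hits


if __name__ == "__main__":
    sample = [
        '12:25:03 Running kube-scheduler -leader-elect=true -v=2',
        'E0617 12:25:03.352161 1 leaderelection.go:224] error retrieving '
        'resource lock kube-system/kube-controller-manager: endpoints '
        '"kube-controller-manager" is forbidden: User '
        '"system:kube-controller-manager" cannot get endpoints in the '
        'namespace "kube-system"',
    ]
    for hit in find_forbidden_locks(sample):
        print(hit["user"], "was denied", hit["resource"], "for lock", hit["lock"])
```

Running this over the logs of a failed and a successful launch gives a quick way to confirm whether the error appears only in the failed case.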

Operating system and kernel: (cat /etc/os-release, uname -r preferred)

Note that it may take up to 10 minutes for the new member to start.

    Network: bridge host macvlan null overlay
    containerd version: 4ab9917febca54791c5f071a9d1f404867857fcc

    | ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS |

    # Verify master host has been added to the etcd member list

From your Ansible controller node, connect to the first master ocp-master1:

    Member 81d77724154f987e added to cluster 11eeb64feb9a2071

    ETCD_NAME = ""
    ETCD_INITIAL_CLUSTER = "etcd-member-ocp-master0="
    ETCD_INITIAL_ADVERTISE_PEER_URLS = ""
    ETCD_INITIAL_CLUSTER_STATE = "existing"

    Starting etcd client cert recovery agent.
    Populating template /usr/local/share/openshift-recovery/template/
    Local etcd snapshot file not found, backup skipped.
    etcd client certs found in /etc/kubernetes/static-pod-resources/kube-apiserver-pod-8 backing up to ./assets/backup/
    Backing up /etc/etcd/nf to ./assets/backup/

    $ sudo -E /usr/local/bin/etcd-member-recover.sh 10.15.155.210
    Backing up /etc/kubernetes/manifests/etcd-member.yaml to.

On your Ansible controller node, check the nodes in your cluster:

    starting kube-controller-manager-pod.yaml

    $ sudo /usr/local/bin/etcd-snapshot-restore.sh /home/core/snapshot.db $INITIAL_CLUSTER
    etcd-member.yaml found in.

If you lose (or purposely delete) two master nodes, etcd quorum will be lost and this will lead to the cluster going offline. If you then try to run oc commands, they will not respond.

    ocp-master2/assets/backup/etcd-ca-bundle.crt
    ocp-master2/assets/backup/etcd-client.crt
    ocp-master2/assets/backup/etcd-client.key
    ocp-master2/assets/backup/etcd-member.yaml
    ocp-master0/assets/backup/etcd-ca-bundle.crt
    ocp-master0/assets/backup/etcd-client.crt
    ocp-master0/assets/backup/etcd-client.key
    ocp-master0/assets/backup/etcd-member.yaml
    ocp-master1/assets/backup/etcd-ca-bundle.crt
    ocp-master1/assets/backup/etcd-client.crt
    ocp-master1/assets/backup/etcd-client.key
    ocp-master1/assets/backup/etcd-member.yaml
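The quorum loss described above follows from simple majority arithmetic: etcd needs more than half of its voting members to be available. The sketch below works through that arithmetic. It assumes a three-member cluster, inferred from the three masters (ocp-master0, ocp-master1, ocp-master2) that appear in the backup listing; the function names are illustrative and are not part of any etcd or OpenShift tooling.

```python
def quorum_size(members: int) -> int:
    """Smallest majority of a cluster with `members` voting members."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def has_quorum(members: int, healthy: int) -> bool:
    """True if `healthy` members are enough to form a majority."""
    if not 0 <= healthy <= members:
        raise ValueError("healthy must be between 0 and members")
    return healthy >= quorum_size(members)


def failure_tolerance(members: int) -> int:
    """How many members can be lost while still keeping quorum."""
    return members - quorum_size(members)


if __name__ == "__main__":
    masters = 3  # assumption: one etcd member per master node
    for lost in range(masters + 1):
        healthy = masters - lost
        state = "quorum kept" if has_quorum(masters, healthy) else "quorum lost"
        print(f"{lost} master(s) lost, {healthy} healthy: {state}")
```

Under these assumptions a three-member cluster tolerates the loss of one master but not two, which matches the behaviour described in the text.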
